[Note from Jon: If you have either read this post annually or simply want to jump to the results without my excessive background and contextualizing, just scroll straight to the graph. Spoiler alert: These are the best results we have ever had!]

Each year I fret about how to best facilitate an appropriate conversation about why our school engages in standardized testing (which for us, like many independent schools in Canada, is the CAT*4, but next year will become the CAT*5), what the results mean (and what they don’t mean), how it impacts the way in which we think about “curriculum” and, ultimately, what the connection is between a student’s individual results and our school’s personalized learning plan for that student. It is not news that education is a field in which pendulums tend to wildly swing back and forth as new research is brought to light. We are always living in that moment and it has always been my preference to aim towards pragmatism. Everything new isn’t always better and, yet, sometimes it is. Sometimes you know right away and sometimes it takes years.

For the last few years, I have taken a blog post that I used to push out in one giant sea of words and broken it into two, and now three, parts, because even I don’t want to read a 3,000-word post. But, truthfully, it still doesn’t seem enough. I continue to worry that I have not done a thorough enough job providing background, research and context to justify a public-facing sharing of standardized test scores. Probably because I haven’t.

And yet.

With the forthcoming launch of Annual Grades 9 & 12 Alumni Surveys and the opening of the admissions season for the 2024-2025 school year, it feels fair and appropriate to be as transparent as we can about how well we are (or aren’t) succeeding academically against an external set of benchmarks, even as we are still facing extraordinary circumstances. [We took the test just a couple of weeks after “October 7th”.] That’s what “transparency” as a value and a verb looks like. We commit to sharing the data and our analysis regardless of outcome. We also do it because we know that for the overwhelming majority of our parents, excellence in secular academics is a non-negotiable, and that in a competitive marketplace with both well-regarded public schools and secular private schools, our parents deserve to see the school’s value proposition validated beyond anecdotes.

Now for the annual litany of caveats and preemptive statements…

We have not yet shared individual reports with our parents. First, our teachers need a chance to review the data to identify which test results resemble their students well enough to simply pass on, and which results require contextualization in private conversation. Those contextualizing conversations will take place in the next few weeks and, thereafter, we should be able to return all results.

There are a few things worth pointing out:

- Because of COVID, this is now only our fifth year taking this assessment at this time of year. We were in the process of expanding the range from Grades 3-8 in 2019, but we paused in 2020 and restricted 2021’s testing to Grades 5-8. So, this is the second year we have tested Grades 3 & 4 on this exam at this time of year. When we shift in Parts 2 & 3 of this analysis to comparative data, this will affect whom we can compare when we analyze the grade (i.e. “Grade 5” over time) or the cohort (i.e. the same group of children over time).

- Because of the shift next year to the CAT*5, we may have no choice but to reset the baseline and (again) build out comparative data year to year.

- The ultimate goal is to have tracking data across all grades which will allow us to see if…

    - The same grade scores as well or better each year.

    - The same cohort shows at least a year’s worth of growth.

- The last issue is in the proper understanding of what a “grade equivalent score” really is.

Grade-equivalent scores appear to show at what grade level and month your child is functioning. However, they cannot actually show this. Let me use an example to illustrate. In reading comprehension, your son in Grade 5 scored a 7.3 grade equivalent on his Grade 5 test. The 7 represents the grade level while the 3 represents the month. 7.3 would represent the seventh grade, third month, which is December. The reason it is the third month is that September is zero, October is one, etc. It is not true, though, that your son is functioning at the seventh grade level, since he was never tested on seventh grade material. He was only tested on fifth grade material. He performed like a seventh grader would on fifth grade material. That’s why grade-equivalent scores should not be used to decide at what grade level a student is functioning.
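For readers who find it easier to see the decoding spelled out, here is a minimal Python sketch of how a grade-equivalent score splits into a grade and a month. The function name and the month list are my own illustration, not anything from the test publisher, and extending the month pattern all the way through June is an assumption based on the "September is zero, October is one" rule described above.

```python
# Month offsets as described above: September is 0, October is 1, and so on.
# Extending the pattern through June (9) is an assumption for illustration.
MONTHS = ["September", "October", "November", "December", "January",
          "February", "March", "April", "May", "June"]


def decode_grade_equivalent(score: str) -> tuple[int, str]:
    """Split a grade-equivalent score such as "7.3" into (grade, month name).

    The score is kept as text so that "7.3" is never subject to
    floating-point rounding; a trailing "+" (as in "9.9+") is ignored.
    """
    grade_text, month_text = score.rstrip("+").split(".")
    return int(grade_text), MONTHS[int(month_text)]


grade, month = decode_grade_equivalent("7.3")
# Reminder: this describes performance on the test actually taken,
# not mastery of Grade 7 material.
print(f"Performed like a Grade {grade} student in {month} would on this test.")
```

Note what the sketch does not do: it says nothing about what material the student has mastered, which is exactly the limitation described above.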

Let me finish this section by being very clear: We do not believe that standardized test scores represent the only, nor surely the best, evidence for academic success. Our goal continues to be providing each student with a “floor, but no ceiling” representing each student’s maximum success. Our best outcome is still producing students who become lifelong learners.

But I also don’t want to undersell the objective evidence that shows that the work we are doing here does in fact lead to tangible success. That’s the headline, but let’s look more closely at the story. (You may wish to zoom in a bit on whatever device you are reading this on…)

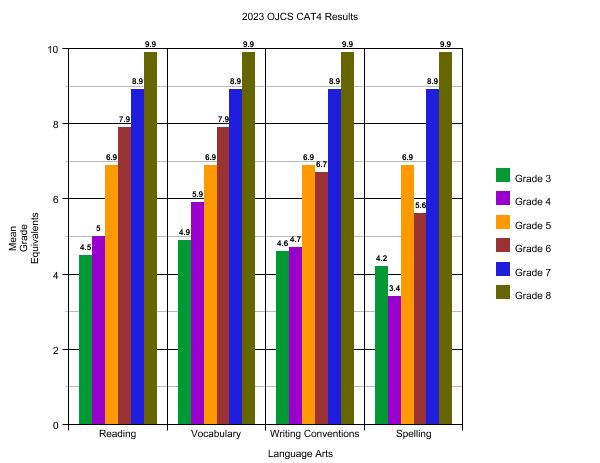

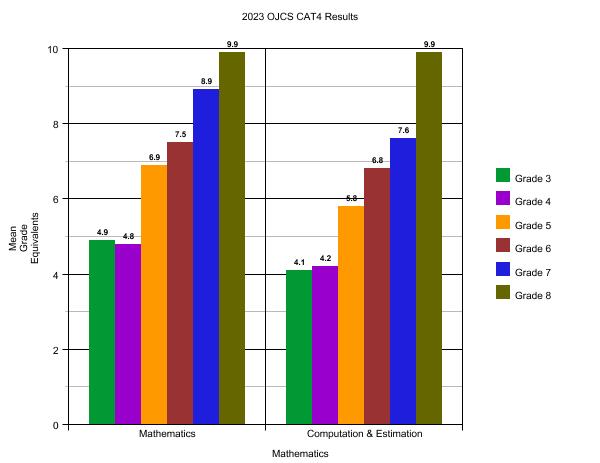

A few tips on how to read this:

- We normally take this exam in the “.2” of each grade-level year, but this year we took it at the “.1”. [This will have a slight impact on the comparative data.] That means that “at grade-level” [again, please refer above to a more precise definition of “grade equivalent scores”] for any grade we are looking at would be 5.1, 6.1, 7.1, etc. For example, if you are looking at Grade 6, anything below 6.1 would constitute “below grade-level” and anything above 6.1 would constitute “above grade-level.”

- The maximum score for any grade is “.9” of the next year’s grade. If, for example, you are looking at Grade 8 and see a score of 9.9, on our forms it actually reads “9.9+” – the maximum score that can be recorded.

- Because of when we take this test, approximately one to two months into the school year, it is reasonable to assume that significant responsibility for the results is attributable to the prior year’s teachers and experiences. But, of course, it is very hard to tease that out exactly.
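The reading rules above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name and labels are hypothetical, the benchmark is taken to be the grade plus this year's “.1” testing point, and the ceiling is taken to be “.9” of the next grade, as described in the tips.

```python
from decimal import Decimal


def classify(grade: int, score: str, testing_month: int = 1) -> str:
    """Label a grade-equivalent score relative to the grade that took the test.

    Assumptions for illustration: the benchmark is "<grade>.<testing_month>"
    (".1" this year) and the highest recordable score is "<grade + 1>.9".
    Decimal is used so that comparisons such as 6.1 vs 6.1 are exact.
    """
    benchmark = Decimal(f"{grade}.{testing_month}")
    ceiling = Decimal(f"{grade + 1}.9")
    value = Decimal(score.rstrip("+"))  # "9.9+" is treated as 9.9
    if value >= ceiling:
        return "maximum recordable"
    if value > benchmark:
        return "above grade-level"
    if value < benchmark:
        return "below grade-level"
    return "at grade-level"


print(classify(6, "5.6"))   # a Grade 6 score of 5.6
print(classify(8, "9.9+"))  # a Grade 8 score recorded as 9.9+
```

Passing `testing_month=2` would reproduce the “.2” benchmark we have used in prior years, which is why the change in timing has a slight impact on comparisons.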

What are the key takeaways from these snapshots of the entire school?

- Looking at six different grades through six different dimensions, there are only two instances out of thirty-six of scoring below grade-level: Spelling in Grades 4 (3.4) and 6 (5.6). This is the best we have ever scored! Every other grade and every other subject is at, above, or way above grade-level.

- For those parents focused on high school readiness, our students in Grades 7 & 8 got the maximum score that can be recorded for each and every academic category except for Grade 7 Computation & Estimation (7.6). Again, our Grade 8s maxed out at 9.9 across the board and our Grade 7s maxed out at 8.9 across the board save one. Again, this is, by far, the best we have ever scored.

It does not require a sophisticated analysis to see how exceedingly well each and every grade has done in just about each and every section. In almost all cases, each and every grade is performing significantly above grade-level. This is a very encouraging set of data points.

Stay tuned next week when we begin to dive into the comparative data. “Part II” will look at the same cohort (the same group of students) over time. “Part III” will look at the same grade over time and conclude this series of posts with some additional summarizing thoughts.